commit

c516fcc1e7

11 changed files with 1084 additions and 761 deletions

54

README.md


@ -5,9 +5,11 @@ For more information on what Starlink is, see [starlink.com](https://www.starlin

|

|||

|

||||

## Prerequisites

|

||||

|

||||

Most of the scripts here are [Python](https://www.python.org/) scripts. To use them, you will need either Python installed on your system or the Docker image. If you use the Docker image, the only other prerequisites are Docker itself and a dish IP address that is reachable. For Linux systems, the python package from your distribution should be fine, as long as it is Python 3. The JSON script should actually work with Python 2.7, but the grpc scripts all require Python 3 (and Python 2.7 is past end-of-life, so it is not recommended anyway).

|

||||

|

||||

`parseJsonHistory.py` operates on a JSON format data representation of the protocol buffer messages, such as that output by [gRPCurl](https://github.com/fullstorydev/grpcurl). The command lines below assume `grpcurl` is installed in the runtime PATH. If that's not the case, just substitute in the full path to the command.

|

||||

|

||||

All the tools that pull data from the dish expect to be able to reach it at the dish's fixed IP address of 192.168.100.1, as do the Starlink [Android app](https://play.google.com/store/apps/details?id=com.starlink.mobile) and [iOS app](https://apps.apple.com/us/app/starlink/id1537177988). When using a router other than the one included with the Starlink installation kit, this usually requires some additional router configuration to make it work. That configuration is beyond the scope of this document, but if the Starlink app doesn't work on your home network, then neither will these scripts. That being said, you do not need the Starlink app installed to make use of these scripts.

|

||||

All the tools that pull data from the dish expect to be able to reach it at the dish's fixed IP address of 192.168.100.1, as do the Starlink [Android app](https://play.google.com/store/apps/details?id=com.starlink.mobile), [iOS app](https://apps.apple.com/us/app/starlink/id1537177988), and the browser app you can run directly from http://192.168.100.1. When using a router other than the one included with the Starlink installation kit, this usually requires some additional router configuration to make it work. That configuration is beyond the scope of this document, but if the Starlink app doesn't work on your home network, then neither will these scripts. That being said, you do not need the Starlink app installed to make use of these scripts.

|

||||

|

||||

The scripts that don't use `grpcurl` to pull data require the `grpcio` Python package at runtime and generating the necessary gRPC protocol code requires the `grpcio-tools` package. Information about how to install both can be found at https://grpc.io/docs/languages/python/quickstart/

|

||||

|

||||

|

|

@ -15,8 +17,14 @@ The scripts that use [MQTT](https://mqtt.org/) for output require the `paho-mqtt

|

|||

|

||||

The scripts that use [InfluxDB](https://www.influxdata.com/products/influxdb/) for output require the `influxdb` Python package. Information about how to install that can be found at https://github.com/influxdata/influxdb-python. Note that this is the (slightly) older version of the InfluxDB client Python module, not the InfluxDB 2.0 client. It can still be made to work with an InfluxDB 2.0 server, but doing so requires using `influx v1` [CLI commands](https://docs.influxdata.com/influxdb/v2.0/reference/cli/influx/v1/) on the server to map the 1.x username, password, and database names to their 2.0 equivalents.

|

||||

|

||||

Running the scripts within a [Docker](https://www.docker.com/) container requires Docker to be installed. Information about how to install that can be found at https://docs.docker.com/engine/install/

|

||||

|

||||

## Usage

|

||||

|

||||

Of the 3 groups below, the grpc scripts are really the only ones being actively developed. The others are mostly by way of example of what could be done with the underlying data.

|

||||

|

||||

### The JSON parser script

|

||||

|

||||

`parseJsonHistory.py` takes input from a file and writes its output to standard output. The easiest way to use it is to pipe the `grpcurl` command directly into it. For example:

|

||||

```

|

||||

grpcurl -plaintext -d {\"get_history\":{}} 192.168.100.1:9200 SpaceX.API.Device.Device/Handle | python parseJsonHistory.py

|

||||

|

|

@ -28,7 +36,11 @@ python parseJsonHistory.py -h

|

|||

|

||||

When used as-is, `parseJsonHistory.py` will summarize packet loss information from the data the dish records. There are other bits of data in there, though, so that script (or more likely the parsing logic it uses, which now resides in `starlink_json.py`) could be used as a starting point or example of how to iterate through it. Most of the data displayed in the Statistics page of the Starlink app appears to come from this same `get_history` gRPC response. See the file `get_history_notes.txt` for some ramblings on how to interpret it.

|

||||

|

||||

The other scripts can do the gRPC communication directly, but they require some generated code to support the specific gRPC protocol messages used. These would normally be generated from .proto files that specify those messages, but to date (2020-Dec), SpaceX has not publicly released such files. The gRPC service running on the dish appears to have [server reflection](https://github.com/grpc/grpc/blob/master/doc/server-reflection.md) enabled, though. `grpcurl` can use that to extract a protoset file, and the `protoc` compiler can use that to make the necessary generated code:

|

||||

The one bit of functionality this script has over the grpc scripts is that it supports capturing the grpcurl output to a file and reading from that, which may be useful if you're collecting data in one place but analyzing it in another. Otherwise, it's probably better to use `dishHistoryStats.py`, described below.
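As a rough illustration of analyzing captured `grpcurl` output somewhere other than where it was collected, the sketch below summarizes ping drop from a saved JSON document. The `dishGetHistory` and `popPingDropRate` field names are assumptions about how `grpcurl` renders the `get_history` response, and `summarize_ping_drop` is a hypothetical helper, not the logic in `parseJsonHistory.py`. It also treats every sample in the array as valid, which the real script may not do.

```python
import json


def summarize_ping_drop(history_json):
    """Summarize ping drop samples from captured grpcurl JSON text.

    The field names used here are assumptions about the JSON layout.
    """
    history = json.loads(history_json)["dishGetHistory"]
    drops = history["popPingDropRate"]
    return {
        "samples": len(drops),
        # Sum of the per-sample drop fractions (0.0 to 1.0 each)
        "total_ping_drop": sum(drops),
        # Number of samples where every ping was dropped
        "count_full_ping_drop": sum(1 for d in drops if d >= 1.0),
    }


if __name__ == "__main__":
    # Made-up sample data standing in for a captured file's contents
    sample = '{"dishGetHistory": {"popPingDropRate": [0.0, 0.5, 1.0, 0.0]}}'
    print(summarize_ping_drop(sample))
```

To use it on a real capture, you would read the text from the file you saved the `grpcurl` output to and pass that string to the function.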

|

||||

|

||||

### The grpc scripts

|

||||

|

||||

This set of scripts can do the gRPC communication directly, but they require some generated code to support the specific gRPC protocol messages used. These would normally be generated from .proto files that specify those messages, but to date (2020-Dec), SpaceX has not publicly released such files. The gRPC service running on the dish appears to have [server reflection](https://github.com/grpc/grpc/blob/master/doc/server-reflection.md) enabled, though. `grpcurl` can use that to extract a protoset file, and the `protoc` compiler can use that to make the necessary generated code:

|

||||

```

|

||||

grpcurl -plaintext -protoset-out dish.protoset 192.168.100.1:9200 describe SpaceX.API.Device.Device

|

||||

mkdir src

|

||||

|

|

@ -41,29 +53,47 @@ python3 -m grpc_tools.protoc --descriptor_set_in=../dish.protoset --python_out=.

|

|||

python3 -m grpc_tools.protoc --descriptor_set_in=../dish.protoset --python_out=. --grpc_python_out=. spacex/api/device/wifi.proto

|

||||

python3 -m grpc_tools.protoc --descriptor_set_in=../dish.protoset --python_out=. --grpc_python_out=. spacex/api/device/wifi_config.proto

|

||||

```

|

||||

Then move the resulting files to where the Python scripts can find them.

|

||||

Then move the resulting files to where the Python scripts can find them in the import path, such as in the same directory as the scripts themselves.

|

||||

|

||||

Once those are available, the `dishHistoryStats.py` script can be used in place of the `grpcurl | parseJsonHistory.py` pipeline, with most of the same command line options.

|

||||

Once those are available, the `dishHistoryStats.py` script can be used in place of the `grpcurl | parseJsonHistory.py` pipeline, with most of the same command line options. For example:

|

||||

```

|

||||

python3 dishHistoryStats.py

|

||||

```

|

||||

|

||||

To collect and record summary stats every hour, you can put something like the following in your user crontab:

|

||||

By default, `dishHistoryStats.py` (and `parseJsonHistory.py`) will output the stats in CSV format. You can use the `-v` option to instead output in a (slightly) more human-readable format.

|

||||

|

||||

To collect and record summary stats at the top of every hour, you could put something like the following in your user crontab (assuming you have moved the scripts to ~/bin and made them executable):

|

||||

```

|

||||

00 * * * * [ -e ~/dishStats.csv ] || ~/bin/dishHistoryStats.py -H >~/dishStats.csv; ~/bin/dishHistoryStats.py >>~/dishStats.csv

|

||||

```

|

||||

|

||||

`dishHistoryInflux.py` and `dishHistoryMqtt.py` are similar, but they send their output to an InfluxDB server and an MQTT broker, respectively. Run them with the `-h` command line option for details on how to specify server and/or database options.

|

||||

|

||||

`dishDumpStatus.py` is even simpler. Just run it as:

|

||||

`dishStatusCsv.py`, `dishStatusInflux.py`, and `dishStatusMqtt.py` output the status data instead of history data, to various data backends. The information they pull is mostly what appears related to the dish in the Debug Data section of the Starlink app. As with the corresponding history scripts, run them with the `-h` command line option for usage details.

|

||||

|

||||

By default, all of these scripts will pull data once, send it off to the specified data backend, and then exit. They can instead be made to run in a periodic loop by passing a `-t` option to specify the loop interval, in seconds. For example, to capture status information to an InfluxDB server every 30 seconds, you could do something like this:

|

||||

```

|

||||

python3 dishStatusInflux.py -t 30 [... probably other args to specify server options ...]

|

||||

```
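For readers curious how the `-t` loop keeps a steady interval, here is a minimal sketch modeled on the loop added to the scripts in this commit. The `run_periodically` and `default_samples` names, and the `max_iterations`, `clock`, and `sleep` parameters, are hypothetical additions so the sketch can be stopped and tested; the scripts themselves loop until terminated.

```python
import time


def run_periodically(body, loop_time, max_iterations=None,
                     clock=time.monotonic, sleep=time.sleep):
    """Call body() once, or repeatedly every loop_time seconds.

    max_iterations, clock and sleep are hypothetical hooks for this sketch.
    Returns the result of the last call to body().
    """
    next_loop = clock()
    count = 0
    while True:
        rc = body()
        count += 1
        if loop_time <= 0:
            return rc  # no loop requested: run once and stop
        if max_iterations is not None and count >= max_iterations:
            return rc
        now = clock()
        # Schedule from the previous deadline so a slow iteration does not
        # push every later one back; never sleep a negative amount.
        next_loop = max(next_loop + loop_time, now)
        sleep(next_loop - now)


def default_samples(loop_time, samples_default=3600):
    """Default sample count: the loop interval if looping, else one hour."""
    return int(loop_time) if loop_time > 0 else samples_default


if __name__ == "__main__":
    run_periodically(lambda: print("poll"), 1, max_iterations=3)
```

Using a monotonic clock means changes to the system time do not disturb the schedule.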

|

||||

|

||||

Some of the scripts (currently only the InfluxDB ones) also support specifying options through environment variables. See details in the scripts for the environment variables that map to options.
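The mapping from environment variables to options can be sketched roughly as follows, based on the `INFLUXDB_*` handling visible in the InfluxDB scripts. The `influx_args_from_env` function and its `environ` parameter are hypothetical, and `INFLUXDB_SSL` handling is left out of this sketch; check the scripts for the authoritative behavior.

```python
import os


def influx_args_from_env(environ=None):
    """Build InfluxDB client arguments from INFLUXDB_* variables.

    Returns (client_args, retention_policy). A variable only counts if it
    is both set and not an empty string.
    """
    env = os.environ if environ is None else environ
    icargs = {"host": "localhost", "timeout": 5, "database": "starlinkstats"}
    rp = None

    if env.get("INFLUXDB_HOST"):
        icargs["host"] = env["INFLUXDB_HOST"]
    if env.get("INFLUXDB_PORT"):
        icargs["port"] = int(env["INFLUXDB_PORT"])
    if env.get("INFLUXDB_USER"):
        icargs["username"] = env["INFLUXDB_USER"]
    if env.get("INFLUXDB_PWD"):
        icargs["password"] = env["INFLUXDB_PWD"]
    if env.get("INFLUXDB_DB"):
        icargs["database"] = env["INFLUXDB_DB"]
    if env.get("INFLUXDB_RP"):
        rp = env["INFLUXDB_RP"]
    return icargs, rp


if __name__ == "__main__":
    print(influx_args_from_env())
```

In the scripts, command line options are parsed after the environment is read, so an option given on the command line takes precedence over the corresponding variable.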

|

||||

|

||||

### Other scripts

|

||||

|

||||

`dishDumpStatus.py` is a simple example of how to use the grpc modules (the ones generated by protoc, not `starlink_grpc.py`) directly. Just run it as:

|

||||

```

|

||||

python3 dishDumpStatus.py

|

||||

```

|

||||

and revel in copious amounts of dish status information. OK, maybe it's not as impressive as all that. This one is really just meant to be a starting point for real functionality to be added to it. The information this script pulls is mostly what appears related to the dish in the Debug Data section of the Starlink app.

|

||||

and revel in copious amounts of dish status information. OK, maybe it's not as impressive as all that. This one is really just meant to be a starting point for real functionality to be added to it.

|

||||

|

||||

`dishStatusCsv.py`, `dishStatusInflux.py`, and `dishStatusMqtt.py` output the same status data, but to various data backends. As with the corresponding history scripts, run them with `-h` command line option for usage details.

|

||||

Possibly more simple examples to come, as the other scripts have started getting a bit complicated.

|

||||

|

||||

## To Be Done (Maybe)

|

||||

|

||||

There are `reboot` and `dish_stow` requests in the Device protocol, too, so it should be trivial to write a command that initiates dish reboot and stow operations. These are easy enough to do with `grpcurl`, though, as there is no need to parse through the response data. For that matter, they're easy enough to do with the Starlink app.

|

||||

|

||||

Proper Python packaging, since some of the scripts are no longer self-contained.

|

||||

|

||||

## Other Tidbits

|

||||

|

||||

The Starlink Android app actually uses port 9201 instead of 9200. Both appear to expose the same gRPC service, but the one on port 9201 uses an HTTP/1.1 wrapper, whereas the one on port 9200 uses HTTP/2.0, which is what most gRPC tools expect.

|

||||

|

|

@ -75,7 +105,7 @@ The Starlink router also exposes a gRPC service, on ports 9000 (HTTP/2.0) and 90

|

|||

Initialization of the container can be performed with the following command:

|

||||

|

||||

```

|

||||

docker run -d --name='starlink-grpc-tools' -e INFLUXDB_HOST={InfluxDB Hostname} \

|

||||

docker run -d -t --name='starlink-grpc-tools' -e INFLUXDB_HOST={InfluxDB Hostname} \

|

||||

-e INFLUXDB_PORT={Port, 8086 usually} \

|

||||

-e INFLUXDB_USER={Optional, InfluxDB Username} \

|

||||

-e INFLUXDB_PWD={Optional, InfluxDB Password} \

|

||||

|

|

@ -83,6 +113,10 @@ docker run -d --name='starlink-grpc-tools' -e INFLUXDB_HOST={InfluxDB Hostname}

|

|||

neurocis/starlink-grpc-tools dishStatusInflux.py -v

|

||||

```

|

||||

|

||||

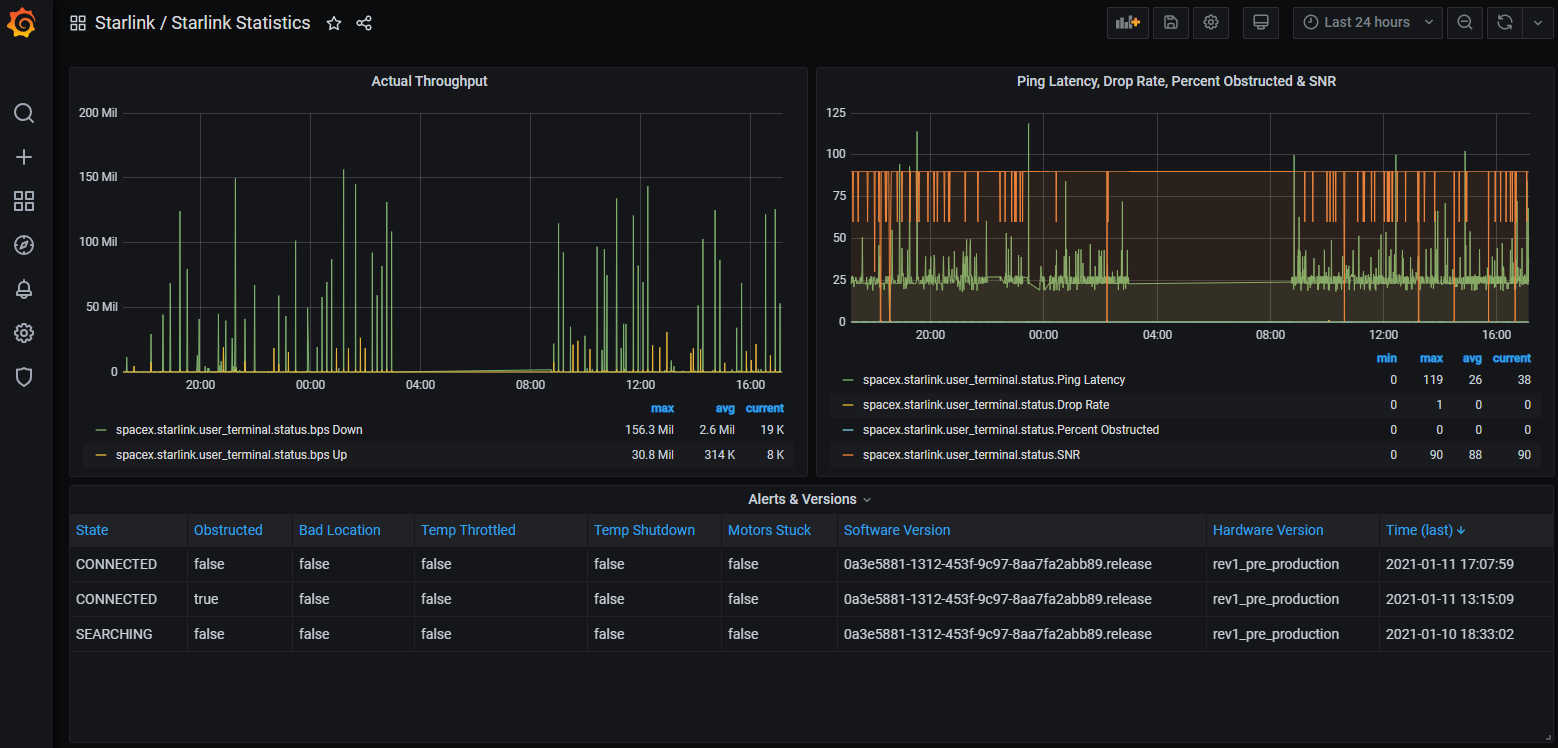

`dishStatusInflux.py -v` is optional and will run same but not -verbose, or you can replace it with one of the other scripts if you wish to run that instead. There is also an `GrafanaDashboard - Starlink Statistics.json` which can be imported to get some charts like:

|

||||

The `-t` option to `docker run` will prevent Python from buffering the script's standard output and can be omitted if you don't care about seeing the verbose output in the container logs as soon as it is printed.

|

||||

|

||||

The trailing `dishStatusInflux.py -v` is optional; omitting it runs the same script, but not in verbose mode. You can also replace it with one of the other scripts if you wish to run that instead, or use other command line options. There is also a `GrafanaDashboard - Starlink Statistics.json` file, which can be imported to get some charts like:

|

||||

|

||||

|

||||

|

||||

You'll probably want to run with the `-t` option to `dishStatusInflux.py` to collect status information periodically for this to be meaningful.

|

||||

|

|

|

|||

|

|

@ -10,57 +10,73 @@

|

|||

#

|

||||

######################################################################

|

||||

|

||||

import datetime

|

||||

import os

|

||||

import sys

|

||||

import getopt

|

||||

import datetime

|

||||

import logging

|

||||

|

||||

import os

|

||||

import signal

|

||||

import sys

|

||||

import time

|

||||

import warnings

|

||||

|

||||

from influxdb import InfluxDBClient

|

||||

|

||||

import starlink_grpc

|

||||

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

opts, args = getopt.getopt(sys.argv[1:], "ahn:p:rs:vC:D:IP:R:SU:")

|

||||

except getopt.GetoptError as err:

|

||||

class Terminated(Exception):
    pass


def handle_sigterm(signum, frame):
    # Turn SIGTERM into an exception so main loop can clean up
    raise Terminated()

|

||||

|

||||

|

||||

def main():

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

opts, args = getopt.getopt(sys.argv[1:], "ahn:p:rs:t:vC:D:IP:R:SU:")

|

||||

except getopt.GetoptError as err:

|

||||

print(str(err))

|

||||

arg_error = True

|

||||

|

||||

# Default to 1 hour worth of data samples.

|

||||

samples_default = 3600

|

||||

samples = samples_default

|

||||

print_usage = False

|

||||

verbose = False

|

||||

run_lengths = False

|

||||

host_default = "localhost"

|

||||

database_default = "starlinkstats"

|

||||

icargs = {"host": host_default, "timeout": 5, "database": database_default}

|

||||

rp = None

|

||||

# Default to 1 hour worth of data samples.

|

||||

samples_default = 3600

|

||||

samples = None

|

||||

print_usage = False

|

||||

verbose = False

|

||||

default_loop_time = 0

|

||||

loop_time = default_loop_time

|

||||

run_lengths = False

|

||||

host_default = "localhost"

|

||||

database_default = "starlinkstats"

|

||||

icargs = {"host": host_default, "timeout": 5, "database": database_default}

|

||||

rp = None

|

||||

flush_limit = 6

|

||||

|

||||

# For each of these check they are both set and not empty string

|

||||

influxdb_host = os.environ.get("INFLUXDB_HOST")

|

||||

if influxdb_host:

|

||||

# For each of these check they are both set and not empty string

|

||||

influxdb_host = os.environ.get("INFLUXDB_HOST")

|

||||

if influxdb_host:

|

||||

icargs["host"] = influxdb_host

|

||||

influxdb_port = os.environ.get("INFLUXDB_PORT")

|

||||

if influxdb_port:

|

||||

influxdb_port = os.environ.get("INFLUXDB_PORT")

|

||||

if influxdb_port:

|

||||

icargs["port"] = int(influxdb_port)

|

||||

influxdb_user = os.environ.get("INFLUXDB_USER")

|

||||

if influxdb_user:

|

||||

influxdb_user = os.environ.get("INFLUXDB_USER")

|

||||

if influxdb_user:

|

||||

icargs["username"] = influxdb_user

|

||||

influxdb_pwd = os.environ.get("INFLUXDB_PWD")

|

||||

if influxdb_pwd:

|

||||

influxdb_pwd = os.environ.get("INFLUXDB_PWD")

|

||||

if influxdb_pwd:

|

||||

icargs["password"] = influxdb_pwd

|

||||

influxdb_db = os.environ.get("INFLUXDB_DB")

|

||||

if influxdb_db:

|

||||

influxdb_db = os.environ.get("INFLUXDB_DB")

|

||||

if influxdb_db:

|

||||

icargs["database"] = influxdb_db

|

||||

influxdb_rp = os.environ.get("INFLUXDB_RP")

|

||||

if influxdb_rp:

|

||||

influxdb_rp = os.environ.get("INFLUXDB_RP")

|

||||

if influxdb_rp:

|

||||

rp = influxdb_rp

|

||||

influxdb_ssl = os.environ.get("INFLUXDB_SSL")

|

||||

if influxdb_ssl:

|

||||

influxdb_ssl = os.environ.get("INFLUXDB_SSL")

|

||||

if influxdb_ssl:

|

||||

icargs["ssl"] = True

|

||||

if influxdb_ssl.lower() == "secure":

|

||||

icargs["verify_ssl"] = True

|

||||

|

|

@ -69,7 +85,7 @@ if influxdb_ssl:

|

|||

else:

|

||||

icargs["verify_ssl"] = influxdb_ssl

|

||||

|

||||

if not arg_error:

|

||||

if not arg_error:

|

||||

if len(args) > 0:

|

||||

arg_error = True

|

||||

else:

|

||||

|

|

@ -86,6 +102,8 @@ if not arg_error:

|

|||

run_lengths = True

|

||||

elif opt == "-s":

|

||||

samples = int(arg)

|

||||

elif opt == "-t":

|

||||

loop_time = float(arg)

|

||||

elif opt == "-v":

|

||||

verbose = True

|

||||

elif opt == "-C":

|

||||

|

|

@ -106,11 +124,11 @@ if not arg_error:

|

|||

elif opt == "-U":

|

||||

icargs["username"] = arg

|

||||

|

||||

if "password" in icargs and "username" not in icargs:

|

||||

if "password" in icargs and "username" not in icargs:

|

||||

print("Password authentication requires username to be set")

|

||||

arg_error = True

|

||||

|

||||

if print_usage or arg_error:

|

||||

if print_usage or arg_error:

|

||||

print("Usage: " + sys.argv[0] + " [options...]")

|

||||

print("Options:")

|

||||

print(" -a: Parse all valid samples")

|

||||

|

|

@ -118,7 +136,10 @@ if print_usage or arg_error:

|

|||

print(" -n <name>: Hostname of InfluxDB server, default: " + host_default)

|

||||

print(" -p <num>: Port number to use on InfluxDB server")

|

||||

print(" -r: Include ping drop run length stats")

|

||||

print(" -s <num>: Number of data samples to parse, default: " + str(samples_default))

|

||||

print(" -s <num>: Number of data samples to parse, default: loop interval,")

|

||||

print(" if set, else " + str(samples_default))

|

||||

print(" -t <num>: Loop interval in seconds or 0 for no loop, default: " +

|

||||

str(default_loop_time))

|

||||

print(" -v: Be verbose")

|

||||

print(" -C <filename>: Enable SSL/TLS using specified CA cert to verify server")

|

||||

print(" -D <name>: Database name to use, default: " + database_default)

|

||||

|

|

@ -129,25 +150,59 @@ if print_usage or arg_error:

|

|||

print(" -U <name>: Set username for authentication")

|

||||

sys.exit(1 if arg_error else 0)

|

||||

|

||||

logging.basicConfig(format="%(levelname)s: %(message)s")

|

||||

if samples is None:

|

||||

samples = int(loop_time) if loop_time > 0 else samples_default

|

||||

|

||||

try:

|

||||

dish_id = starlink_grpc.get_id()

|

||||

except starlink_grpc.GrpcError as e:

|

||||

logging.error("Failure getting dish ID: " + str(e))

|

||||

sys.exit(1)

|

||||

logging.basicConfig(format="%(levelname)s: %(message)s")

|

||||

|

||||

timestamp = datetime.datetime.utcnow()

|

||||

class GlobalState:

|

||||

pass

|

||||

|

||||

try:

|

||||

gstate = GlobalState()

|

||||

gstate.dish_id = None

|

||||

gstate.points = []

|

||||

|

||||

    def conn_error(msg, *args):
        # Connection errors that happen in an interval loop are not critical
        # failures, but are interesting enough to print in non-verbose mode.
        if loop_time > 0:
            print(msg % args)
        else:
            logging.error(msg, *args)

|

||||

|

||||

    def flush_points(client):
        try:
            client.write_points(gstate.points, retention_policy=rp)
            if verbose:
                print("Data points written: " + str(len(gstate.points)))
            gstate.points.clear()
        except Exception as e:
            conn_error("Failed writing to InfluxDB database: %s", str(e))
            return 1

        return 0

|

||||

|

||||

def loop_body(client):

|

||||

if gstate.dish_id is None:

|

||||

try:

|

||||

gstate.dish_id = starlink_grpc.get_id()

|

||||

if verbose:

|

||||

print("Using dish ID: " + gstate.dish_id)

|

||||

except starlink_grpc.GrpcError as e:

|

||||

conn_error("Failure getting dish ID: %s", str(e))

|

||||

return 1

|

||||

|

||||

timestamp = datetime.datetime.utcnow()

|

||||

|

||||

try:

|

||||

g_stats, pd_stats, rl_stats = starlink_grpc.history_ping_stats(samples, verbose)

|

||||

except starlink_grpc.GrpcError as e:

|

||||

logging.error("Failure getting ping stats: " + str(e))

|

||||

sys.exit(1)

|

||||

except starlink_grpc.GrpcError as e:

|

||||

conn_error("Failure getting ping stats: %s", str(e))

|

||||

return 1

|

||||

|

||||

all_stats = g_stats.copy()

|

||||

all_stats.update(pd_stats)

|

||||

if run_lengths:

|

||||

all_stats = g_stats.copy()

|

||||

all_stats.update(pd_stats)

|

||||

if run_lengths:

|

||||

for k, v in rl_stats.items():

|

||||

if k.startswith("run_"):

|

||||

for i, subv in enumerate(v, start=1):

|

||||

|

|

@ -155,24 +210,47 @@ if run_lengths:

|

|||

else:

|

||||

all_stats[k] = v

|

||||

|

||||

points = [{

|

||||

gstate.points.append({

|

||||

"measurement": "spacex.starlink.user_terminal.ping_stats",

|

||||

"tags": {"id": dish_id},

|

||||

"tags": {

|

||||

"id": gstate.dish_id

|

||||

},

|

||||

"time": timestamp,

|

||||

"fields": all_stats,

|

||||

}]

|

||||

})

|

||||

if verbose:

|

||||

print("Data points queued: " + str(len(gstate.points)))

|

||||

|

||||

if "verify_ssl" in icargs and not icargs["verify_ssl"]:

|

||||

if len(gstate.points) >= flush_limit:

|

||||

return flush_points(client)

|

||||

|

||||

return 0

|

||||

|

||||

if "verify_ssl" in icargs and not icargs["verify_ssl"]:

|

||||

# user has explicitly said be insecure, so don't warn about it

|

||||

warnings.filterwarnings("ignore", message="Unverified HTTPS request")

|

||||

|

||||

influx_client = InfluxDBClient(**icargs)

|

||||

try:

|

||||

influx_client.write_points(points, retention_policy=rp)

|

||||

rc = 0

|

||||

except Exception as e:

|

||||

logging.error("Failed writing to InfluxDB database: " + str(e))

|

||||

rc = 1

|

||||

finally:

|

||||

signal.signal(signal.SIGTERM, handle_sigterm)

|

||||

influx_client = InfluxDBClient(**icargs)

|

||||

try:

|

||||

next_loop = time.monotonic()

|

||||

while True:

|

||||

rc = loop_body(influx_client)

|

||||

if loop_time > 0:

|

||||

now = time.monotonic()

|

||||

next_loop = max(next_loop + loop_time, now)

|

||||

time.sleep(next_loop - now)

|

||||

else:

|

||||

break

|

||||

except Terminated:

|

||||

pass

|

||||

finally:

|

||||

if gstate.points:

|

||||

rc = flush_points(influx_client)

|

||||

influx_client.close()

|

||||

sys.exit(rc)

|

||||

|

||||

sys.exit(rc)

|

||||

|

||||

|

||||

if __name__ == '__main__':

|

||||

main()

|

||||

|

|

|

|||

|

|

@ -10,9 +10,10 @@

|

|||

#

|

||||

######################################################################

|

||||

|

||||

import sys

|

||||

import getopt

|

||||

import logging

|

||||

import sys

|

||||

import time

|

||||

|

||||

try:

|

||||

import ssl

|

||||

|

|

@ -24,26 +25,30 @@ import paho.mqtt.publish

|

|||

|

||||

import starlink_grpc

|

||||

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

opts, args = getopt.getopt(sys.argv[1:], "ahn:p:rs:vC:ISP:U:")

|

||||

except getopt.GetoptError as err:

|

||||

def main():

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

opts, args = getopt.getopt(sys.argv[1:], "ahn:p:rs:t:vC:ISP:U:")

|

||||

except getopt.GetoptError as err:

|

||||

print(str(err))

|

||||

arg_error = True

|

||||

|

||||

# Default to 1 hour worth of data samples.

|

||||

samples_default = 3600

|

||||

samples = samples_default

|

||||

print_usage = False

|

||||

verbose = False

|

||||

run_lengths = False

|

||||

host_default = "localhost"

|

||||

mqargs = {"hostname": host_default}

|

||||

username = None

|

||||

password = None

|

||||

# Default to 1 hour worth of data samples.

|

||||

samples_default = 3600

|

||||

samples = None

|

||||

print_usage = False

|

||||

verbose = False

|

||||

default_loop_time = 0

|

||||

loop_time = default_loop_time

|

||||

run_lengths = False

|

||||

host_default = "localhost"

|

||||

mqargs = {"hostname": host_default}

|

||||

username = None

|

||||

password = None

|

||||

|

||||

if not arg_error:

|

||||

if not arg_error:

|

||||

if len(args) > 0:

|

||||

arg_error = True

|

||||

else:

|

||||

|

|

@ -60,6 +65,8 @@ if not arg_error:

|

|||

run_lengths = True

|

||||

elif opt == "-s":

|

||||

samples = int(arg)

|

||||

elif opt == "-t":

|

||||

loop_time = float(arg)

|

||||

elif opt == "-v":

|

||||

verbose = True

|

||||

elif opt == "-C":

|

||||

|

|

@ -77,11 +84,11 @@ if not arg_error:

|

|||

elif opt == "-U":

|

||||

username = arg

|

||||

|

||||

if username is None and password is not None:

|

||||

if username is None and password is not None:

|

||||

print("Password authentication requires username to be set")

|

||||

arg_error = True

|

||||

|

||||

if print_usage or arg_error:

|

||||

if print_usage or arg_error:

|

||||

print("Usage: " + sys.argv[0] + " [options...]")

|

||||

print("Options:")

|

||||

print(" -a: Parse all valid samples")

|

||||

|

|

@ -89,7 +96,10 @@ if print_usage or arg_error:

|

|||

print(" -n <name>: Hostname of MQTT broker, default: " + host_default)

|

||||

print(" -p <num>: Port number to use on MQTT broker")

|

||||

print(" -r: Include ping drop run length stats")

|

||||

print(" -s <num>: Number of data samples to parse, default: " + str(samples_default))

|

||||

print(" -s <num>: Number of data samples to parse, default: loop interval,")

|

||||

print(" if set, else " + str(samples_default))

|

||||

print(" -t <num>: Loop interval in seconds or 0 for no loop, default: " +

|

||||

str(default_loop_time))

|

||||

print(" -v: Be verbose")

|

||||

print(" -C <filename>: Enable SSL/TLS using specified CA cert to verify broker")

|

||||

print(" -I: Enable SSL/TLS but disable certificate verification (INSECURE!)")

|

||||

|

|

@ -98,37 +108,78 @@ if print_usage or arg_error:

|

|||

print(" -U: Set username for authentication")

|

||||

sys.exit(1 if arg_error else 0)

|

||||

|

||||

logging.basicConfig(format="%(levelname)s: %(message)s")

|

||||

if samples is None:

|

||||

samples = int(loop_time) if loop_time > 0 else samples_default

|

||||

|

||||

try:

|

||||

dish_id = starlink_grpc.get_id()

|

||||

except starlink_grpc.GrpcError as e:

|

||||

logging.error("Failure getting dish ID: " + str(e))

|

||||

sys.exit(1)

|

||||

if username is not None:

|

||||

mqargs["auth"] = {"username": username}

|

||||

if password is not None:

|

||||

mqargs["auth"]["password"] = password

|

||||

|

||||

try:

|

||||

logging.basicConfig(format="%(levelname)s: %(message)s")

|

||||

|

||||

class GlobalState:

|

||||

pass

|

||||

|

||||

gstate = GlobalState()

|

||||

gstate.dish_id = None

|

||||

|

||||

    def conn_error(msg, *args):
        # Connection errors that happen in an interval loop are not critical
        # failures, but are interesting enough to print in non-verbose mode.
        if loop_time > 0:
            print(msg % args)
        else:
            logging.error(msg, *args)

|

||||

|

||||

def loop_body():

|

||||

if gstate.dish_id is None:

|

||||

try:

|

||||

gstate.dish_id = starlink_grpc.get_id()

|

||||

if verbose:

|

||||

print("Using dish ID: " + gstate.dish_id)

|

||||

except starlink_grpc.GrpcError as e:

|

||||

conn_error("Failure getting dish ID: %s", str(e))

|

||||

return 1

|

||||

|

||||

try:

|

||||

g_stats, pd_stats, rl_stats = starlink_grpc.history_ping_stats(samples, verbose)

|

||||

except starlink_grpc.GrpcError as e:

|

||||

logging.error("Failure getting ping stats: " + str(e))

|

||||

sys.exit(1)

|

||||

except starlink_grpc.GrpcError as e:

|

||||

conn_error("Failure getting ping stats: %s", str(e))

|

||||

return 1

|

||||

|

||||

topic_prefix = "starlink/dish_ping_stats/" + dish_id + "/"

|

||||

msgs = [(topic_prefix + k, v, 0, False) for k, v in g_stats.items()]

|

||||

msgs.extend([(topic_prefix + k, v, 0, False) for k, v in pd_stats.items()])

|

||||

if run_lengths:

|

||||

topic_prefix = "starlink/dish_ping_stats/" + gstate.dish_id + "/"

|

||||

msgs = [(topic_prefix + k, v, 0, False) for k, v in g_stats.items()]

|

||||

msgs.extend([(topic_prefix + k, v, 0, False) for k, v in pd_stats.items()])

|

||||

if run_lengths:

|

||||

for k, v in rl_stats.items():

|

||||

if k.startswith("run_"):

|

||||

msgs.append((topic_prefix + k, ",".join(str(x) for x in v), 0, False))

|

||||

else:

|

||||

msgs.append((topic_prefix + k, v, 0, False))

|

||||

|

||||

if username is not None:

|

||||

mqargs["auth"] = {"username": username}

|

||||

if password is not None:

|

||||

mqargs["auth"]["password"] = password

|

||||

try:

|

||||

paho.mqtt.publish.multiple(msgs, client_id=gstate.dish_id, **mqargs)

|

||||

if verbose:

|

||||

print("Successfully published to MQTT broker")

|

||||

except Exception as e:

|

||||

conn_error("Failed publishing to MQTT broker: %s", str(e))

|

||||

return 1

|

||||

|

||||

try:

|

||||

paho.mqtt.publish.multiple(msgs, client_id=dish_id, **mqargs)

|

||||

except Exception as e:

|

||||

logging.error("Failed publishing to MQTT broker: " + str(e))

|

||||

sys.exit(1)

|

||||

return 0

|

||||

|

||||

next_loop = time.monotonic()

|

||||

while True:

|

||||

rc = loop_body()

|

||||

if loop_time > 0:

|

||||

now = time.monotonic()

|

||||

next_loop = max(next_loop + loop_time, now)

|

||||

time.sleep(next_loop - now)

|

||||

else:

|

||||

break

|

||||

|

||||

sys.exit(rc)

|

||||

|

||||

|

||||

if __name__ == '__main__':

|

||||

main()

|

||||

|

|

|

|||

|

|

@ -11,29 +11,34 @@

|

|||

######################################################################

|

||||

|

||||

import datetime

|

||||

import sys

|

||||

import getopt

|

||||

import logging

|

||||

import sys

|

||||

import time

|

||||

|

||||

import starlink_grpc

|

||||

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

opts, args = getopt.getopt(sys.argv[1:], "ahrs:vH")

|

||||

except getopt.GetoptError as err:

|

||||

def main():

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

opts, args = getopt.getopt(sys.argv[1:], "ahrs:t:vH")

|

||||

except getopt.GetoptError as err:

|

||||

print(str(err))

|

||||

arg_error = True

|

||||

|

||||

# Default to 1 hour worth of data samples.

|

||||

samples_default = 3600

|

||||

samples = samples_default

|

||||

print_usage = False

|

||||

verbose = False

|

||||

print_header = False

|

||||

run_lengths = False

|

||||

# Default to 1 hour worth of data samples.

|

||||

samples_default = 3600

|

||||

samples = None

|

||||

print_usage = False

|

||||

verbose = False

|

||||

default_loop_time = 0

|

||||

loop_time = default_loop_time

|

||||

run_lengths = False

|

||||

print_header = False

|

||||

|

||||

if not arg_error:

|

||||

if not arg_error:

|

||||

if len(args) > 0:

|

||||

arg_error = True

|

||||

else:

|

||||

|

|

@@ -46,27 +51,35 @@ if not arg_error:

|

|||

run_lengths = True

|

||||

elif opt == "-s":

|

||||

samples = int(arg)

|

||||

elif opt == "-t":

|

||||

loop_time = float(arg)

|

||||

elif opt == "-v":

|

||||

verbose = True

|

||||

elif opt == "-H":

|

||||

print_header = True

|

||||

|

||||

if print_usage or arg_error:

|

||||

if print_usage or arg_error:

|

||||

print("Usage: " + sys.argv[0] + " [options...]")

|

||||

print("Options:")

|

||||

print(" -a: Parse all valid samples")

|

||||

print(" -h: Be helpful")

|

||||

print(" -r: Include ping drop run length stats")

|

||||

print(" -s <num>: Number of data samples to parse, default: " + str(samples_default))

|

||||

print(" -s <num>: Number of data samples to parse, default: loop interval,")

|

||||

print(" if set, else " + str(samples_default))

|

||||

print(" -t <num>: Loop interval in seconds or 0 for no loop, default: " +

|

||||

str(default_loop_time))

|

||||

print(" -v: Be verbose")

|

||||

print(" -H: print CSV header instead of parsing file")

|

||||

print(" -H: print CSV header instead of parsing history data")

|

||||

sys.exit(1 if arg_error else 0)

|

||||

|

||||

logging.basicConfig(format="%(levelname)s: %(message)s")

|

||||

if samples is None:

|

||||

samples = int(loop_time) if loop_time > 0 else samples_default

|

||||

|

||||

g_fields, pd_fields, rl_fields = starlink_grpc.history_ping_field_names()

|

||||

logging.basicConfig(format="%(levelname)s: %(message)s")

|

||||

|

||||

if print_header:

|

||||

g_fields, pd_fields, rl_fields = starlink_grpc.history_ping_field_names()

|

||||

|

||||

if print_header:

|

||||

header = ["datetimestamp_utc"]

|

||||

header.extend(g_fields)

|

||||

header.extend(pd_fields)

|

||||

|

|

@@ -79,15 +92,16 @@ if print_header:

|

|||

print(",".join(header))

|

||||

sys.exit(0)

|

||||

|

||||

timestamp = datetime.datetime.utcnow()

|

||||

def loop_body():

|

||||

timestamp = datetime.datetime.utcnow()

|

||||

|

||||

try:

|

||||

try:

|

||||

g_stats, pd_stats, rl_stats = starlink_grpc.history_ping_stats(samples, verbose)

|

||||

except starlink_grpc.GrpcError as e:

|

||||

logging.error("Failure getting ping stats: " + str(e))

|

||||

sys.exit(1)

|

||||

except starlink_grpc.GrpcError as e:

|

||||

logging.error("Failure getting ping stats: %s", str(e))

|

||||

return 1

|

||||

|

||||

if verbose:

|

||||

if verbose:

|

||||

print("Parsed samples: " + str(g_stats["samples"]))

|

||||

print("Total ping drop: " + str(pd_stats["total_ping_drop"]))

|

||||

print("Count of drop == 1: " + str(pd_stats["count_full_ping_drop"]))

|

||||

|

|

@@ -100,9 +114,13 @@ if verbose:

|

|||

if run_lengths:

|

||||

print("Initial drop run fragment: " + str(rl_stats["init_run_fragment"]))

|

||||

print("Final drop run fragment: " + str(rl_stats["final_run_fragment"]))

|

||||

print("Per-second drop runs: " + ", ".join(str(x) for x in rl_stats["run_seconds"]))

|

||||

print("Per-minute drop runs: " + ", ".join(str(x) for x in rl_stats["run_minutes"]))

|

||||

else:

|

||||

print("Per-second drop runs: " +

|

||||

", ".join(str(x) for x in rl_stats["run_seconds"]))

|

||||

print("Per-minute drop runs: " +

|

||||

", ".join(str(x) for x in rl_stats["run_minutes"]))

|

||||

if loop_time > 0:

|

||||

print()

|

||||

else:

|

||||

csv_data = [timestamp.replace(microsecond=0).isoformat()]

|

||||

csv_data.extend(str(g_stats[field]) for field in g_fields)

|

||||

csv_data.extend(str(pd_stats[field]) for field in pd_fields)

|

||||

|

|

@@ -113,3 +131,21 @@ else:

|

|||

else:

|

||||

csv_data.append(str(rl_stats[field]))

|

||||

print(",".join(csv_data))

|

||||

|

||||

return 0

|

||||

|

||||

next_loop = time.monotonic()

|

||||

while True:

|

||||

rc = loop_body()

|

||||

if loop_time > 0:

|

||||

now = time.monotonic()

|

||||

next_loop = max(next_loop + loop_time, now)

|

||||

time.sleep(next_loop - now)

|

||||

else:

|

||||

break

|

||||

|

||||

sys.exit(rc)

|

||||

|

||||

|

||||

if __name__ == '__main__':

|

||||

main()
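
The option handling in the script above makes the sample count depend on the loop interval when `-s` is not given. The sketch below isolates that rule, assuming a hypothetical `parse_options` helper that handles only `-s` and `-t`; the real script parses more options and reports errors through its usage text.

```python
import getopt


def parse_options(argv, samples_default=3600, default_loop_time=0):
    """Parse -s and -t and apply the sample-count fallback from the diff."""
    opts, args = getopt.getopt(argv, "s:t:")
    if args:
        raise getopt.GetoptError("unexpected positional arguments")
    samples = None
    loop_time = default_loop_time
    for opt, arg in opts:
        if opt == "-s":
            samples = int(arg)
        elif opt == "-t":
            loop_time = float(arg)
    if samples is None:
        # No explicit -s: when looping, parse as many samples as there are
        # whole seconds in the interval; otherwise use the one-hour default.
        samples = int(loop_time) if loop_time > 0 else samples_default
    return samples, loop_time
```

Note that `int()` truncates, so under this rule an interval below one second (for example `-t 0.5`) yields a sample count of 0 unless `-s` is also given.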


102

dishStatusCsv.py


|

|

@@ -1,53 +1,63 @@

|

|||

#!/usr/bin/python3

|

||||

######################################################################

|

||||

#

|

||||

# Output get_status info in CSV format.

|

||||

# Output Starlink user terminal status info in CSV format.

|

||||

#

|

||||

# This script pulls the current status once and prints to stdout.

|

||||

# This script pulls the current status and prints to stdout either

|

||||

# once or in a periodic loop.

|

||||

#

|

||||

######################################################################

|

||||

|

||||

import datetime

|

||||

import sys

|

||||

import getopt

|

||||

import logging

|

||||

import sys

|

||||

import time

|

||||

|

||||

import grpc

|

||||

|

||||

import spacex.api.device.device_pb2

|

||||

import spacex.api.device.device_pb2_grpc

|

||||

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

opts, args = getopt.getopt(sys.argv[1:], "hH")

|

||||

except getopt.GetoptError as err:

|

||||

def main():

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

opts, args = getopt.getopt(sys.argv[1:], "ht:H")

|

||||

except getopt.GetoptError as err:

|

||||

print(str(err))

|

||||

arg_error = True

|

||||

|

||||

print_usage = False

|

||||

print_header = False

|

||||

print_usage = False

|

||||

default_loop_time = 0

|

||||

loop_time = default_loop_time

|

||||

print_header = False

|

||||

|

||||

if not arg_error:

|

||||

if not arg_error:

|

||||

if len(args) > 0:

|

||||

arg_error = True

|

||||

else:

|

||||

for opt, arg in opts:

|

||||

if opt == "-h":

|

||||

print_usage = True

|

||||

elif opt == "-t":

|

||||

loop_time = float(arg)

|

||||

elif opt == "-H":

|

||||

print_header = True

|

||||

|

||||

if print_usage or arg_error:

|

||||

if print_usage or arg_error:

|

||||

print("Usage: " + sys.argv[0] + " [options...]")

|

||||

print("Options:")

|

||||

print(" -h: Be helpful")

|

||||

print(" -t <num>: Loop interval in seconds or 0 for no loop, default: " +

|

||||

str(default_loop_time))

|

||||

print(" -H: print CSV header instead of parsing file")

|

||||

sys.exit(1 if arg_error else 0)

|

||||

|

||||

logging.basicConfig(format="%(levelname)s: %(message)s")

|

||||

logging.basicConfig(format="%(levelname)s: %(message)s")

|

||||

|

||||

if print_header:

|

||||

if print_header:

|

||||

header = [

|

||||

"datetimestamp_utc",

|

||||

"hardware_version",

|

||||

|

|

@@ -63,38 +73,37 @@ if print_header:

|

|||

"alerts",

|

||||

"fraction_obstructed",

|

||||

"currently_obstructed",

|

||||

"seconds_obstructed"

|

||||

"seconds_obstructed",

|

||||

]

|

||||

header.extend("wedges_fraction_obstructed_" + str(x) for x in range(12))

|

||||

print(",".join(header))

|

||||

sys.exit(0)

|

||||

|

||||

try:

|

||||

def loop_body():

|

||||

timestamp = datetime.datetime.utcnow()

|

||||

|

||||

try:

|

||||

with grpc.insecure_channel("192.168.100.1:9200") as channel:

|

||||

stub = spacex.api.device.device_pb2_grpc.DeviceStub(channel)

|

||||

response = stub.Handle(spacex.api.device.device_pb2.Request(get_status={}))

|

||||

except grpc.RpcError:

|

||||

logging.error("Failed getting status info")

|

||||

sys.exit(1)

|

||||

|

||||

timestamp = datetime.datetime.utcnow()

|

||||

status = response.dish_get_status

|

||||

|

||||

status = response.dish_get_status

|

||||

|

||||

# More alerts may be added in future, so rather than list them individually,

|

||||

# build a bit field based on field numbers of the DishAlerts message.

|

||||

alert_bits = 0

|

||||

for alert in status.alerts.ListFields():

|

||||

# More alerts may be added in future, so rather than list them individually,

|

||||

# build a bit field based on field numbers of the DishAlerts message.

|

||||

alert_bits = 0

|

||||

for alert in status.alerts.ListFields():

|

||||

alert_bits |= (1 if alert[1] else 0) << (alert[0].number - 1)

|

||||

|

||||

csv_data = [

|

||||

csv_data = [

|

||||

timestamp.replace(microsecond=0).isoformat(),

|

||||

status.device_info.id,

|

||||

status.device_info.hardware_version,

|

||||

status.device_info.software_version,

|

||||

spacex.api.device.dish_pb2.DishState.Name(status.state)

|

||||

]

|

||||

csv_data.extend(str(x) for x in [

|

||||

spacex.api.device.dish_pb2.DishState.Name(status.state),

|

||||

]

|

||||

csv_data.extend(

|

||||

str(x) for x in [

|

||||

status.device_state.uptime_s,

|

||||

status.snr,

|

||||

status.seconds_to_first_nonempty_slot,

|

||||

|

|

@@ -105,7 +114,34 @@ csv_data.extend(str(x) for x in [

|

|||

alert_bits,

|

||||

status.obstruction_stats.fraction_obstructed,

|

||||

status.obstruction_stats.currently_obstructed,

|

||||

status.obstruction_stats.last_24h_obstructed_s

|

||||

])

|

||||

csv_data.extend(str(x) for x in status.obstruction_stats.wedge_abs_fraction_obstructed)

|

||||

print(",".join(csv_data))

|

||||

status.obstruction_stats.last_24h_obstructed_s,

|

||||

])

|

||||

csv_data.extend(str(x) for x in status.obstruction_stats.wedge_abs_fraction_obstructed)

|

||||

rc = 0

|

||||

except grpc.RpcError:

|

||||

if loop_time <= 0:

|

||||

logging.error("Failed getting status info")

|

||||

csv_data = [

|

||||

timestamp.replace(microsecond=0).isoformat(), "", "", "", "DISH_UNREACHABLE"

|

||||

]

|

||||

rc = 1

|

||||

|

||||

print(",".join(csv_data))

|

||||

|

||||

return rc

|

||||

|

||||

next_loop = time.monotonic()

|

||||

while True:

|

||||

rc = loop_body()

|

||||

if loop_time > 0:

|

||||

now = time.monotonic()

|

||||

next_loop = max(next_loop + loop_time, now)

|

||||

time.sleep(next_loop - now)

|

||||

else:

|

||||

break

|

||||

|

||||

sys.exit(rc)

|

||||

|

||||

|

||||

if __name__ == '__main__':

|

||||

main()
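
The `alerts` column in the script above is built by mapping each alert's protobuf field number N to bit N-1 of an integer. The sketch below shows the same packing on plain `(field_number, value)` tuples so it runs without the protobuf modules; `pack_alert_bits` and `active_alert_numbers` are hypothetical names, and the decoding helper is an illustration that does not appear in the commit.

```python
def pack_alert_bits(fields):
    """Pack (field_number, value) pairs into an integer bit field.

    Field number N maps to bit N-1, the same shift used in the diff.
    """
    alert_bits = 0
    for number, value in fields:
        alert_bits |= (1 if value else 0) << (number - 1)
    return alert_bits


def active_alert_numbers(alert_bits):
    """Recover the field numbers whose bits are set (hypothetical helper)."""
    numbers = []
    bit = 0
    while alert_bits >> bit:
        if (alert_bits >> bit) & 1:
            numbers.append(bit + 1)
        bit += 1
    return numbers
```

Because the mapping depends only on field numbers, a consumer of the CSV can decode alerts added later without the script needing to list them by name.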


@@ -8,59 +8,71 @@

|

|||

#

|

||||

######################################################################

|

||||

|

||||

import time

|

||||

import os

|

||||

import sys

|

||||

import getopt

|

||||

import logging

|

||||

import os

|

||||

import signal

|

||||

import sys

|

||||

import time

|

||||

import warnings

|

||||

|

||||

import grpc

|

||||

from influxdb import InfluxDBClient

|

||||

from influxdb import SeriesHelper

|

||||

|

||||

import grpc

|

||||

|

||||

import spacex.api.device.device_pb2

|

||||

import spacex.api.device.device_pb2_grpc

|

||||

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

class Terminated(Exception):

|

||||

pass

|

||||

|

||||

|

||||

def handle_sigterm(signum, frame):

|

||||

# Turn SIGTERM into an exception so main loop can clean up

|

||||

raise Terminated()

|

||||

|

||||

|

||||

def main():

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

opts, args = getopt.getopt(sys.argv[1:], "hn:p:t:vC:D:IP:R:SU:")

|

||||

except getopt.GetoptError as err:

|

||||

except getopt.GetoptError as err:

|

||||

print(str(err))

|

||||

arg_error = True

|

||||

|

||||

print_usage = False

|

||||

verbose = False

|

||||

host_default = "localhost"

|

||||

database_default = "starlinkstats"

|

||||

icargs = {"host": host_default, "timeout": 5, "database": database_default}

|

||||

rp = None

|

||||

default_sleep_time = 30

|

||||

sleep_time = default_sleep_time

|

||||

print_usage = False

|

||||

verbose = False

|

||||

default_loop_time = 0

|

||||

loop_time = default_loop_time

|

||||

host_default = "localhost"

|

||||

database_default = "starlinkstats"

|

||||

icargs = {"host": host_default, "timeout": 5, "database": database_default}

|

||||

rp = None

|

||||

flush_limit = 6

|

||||

|

||||

# For each of these check they are both set and not empty string

|

||||

influxdb_host = os.environ.get("INFLUXDB_HOST")

|

||||

if influxdb_host:

|

||||

# For each of these check they are both set and not empty string

|

||||

influxdb_host = os.environ.get("INFLUXDB_HOST")

|

||||

if influxdb_host:

|

||||

icargs["host"] = influxdb_host

|

||||

influxdb_port = os.environ.get("INFLUXDB_PORT")

|

||||

if influxdb_port:

|

||||

influxdb_port = os.environ.get("INFLUXDB_PORT")

|

||||

if influxdb_port:

|

||||

icargs["port"] = int(influxdb_port)

|

||||

influxdb_user = os.environ.get("INFLUXDB_USER")

|

||||

if influxdb_user:

|

||||

influxdb_user = os.environ.get("INFLUXDB_USER")

|

||||

if influxdb_user:

|

||||

icargs["username"] = influxdb_user

|

||||

influxdb_pwd = os.environ.get("INFLUXDB_PWD")

|

||||

if influxdb_pwd:

|

||||

influxdb_pwd = os.environ.get("INFLUXDB_PWD")

|

||||

if influxdb_pwd:

|

||||

icargs["password"] = influxdb_pwd

|

||||

influxdb_db = os.environ.get("INFLUXDB_DB")

|

||||

if influxdb_db:

|

||||

influxdb_db = os.environ.get("INFLUXDB_DB")

|

||||

if influxdb_db:

|

||||

icargs["database"] = influxdb_db

|

||||

influxdb_rp = os.environ.get("INFLUXDB_RP")

|

||||

if influxdb_rp:

|

||||

influxdb_rp = os.environ.get("INFLUXDB_RP")

|

||||

if influxdb_rp:

|

||||

rp = influxdb_rp

|

||||

influxdb_ssl = os.environ.get("INFLUXDB_SSL")

|

||||

if influxdb_ssl:

|

||||

influxdb_ssl = os.environ.get("INFLUXDB_SSL")

|

||||

if influxdb_ssl:

|

||||

icargs["ssl"] = True

|

||||

if influxdb_ssl.lower() == "secure":

|

||||

icargs["verify_ssl"] = True

|

||||

|

|

@@ -69,7 +81,7 @@ if influxdb_ssl:

|

|||

else:

|

||||

icargs["verify_ssl"] = influxdb_ssl

|

||||

|

||||

if not arg_error:

|

||||

if not arg_error:

|

||||

if len(args) > 0:

|

||||

arg_error = True

|

||||

else:

|

||||

|

|

@@ -81,7 +93,7 @@ if not arg_error:

|

|||

elif opt == "-p":

|

||||

icargs["port"] = int(arg)

|

||||

elif opt == "-t":

|

||||

sleep_time = int(arg)

|

||||

loop_time = int(arg)

|

||||

elif opt == "-v":

|

||||

verbose = True

|

||||

elif opt == "-C":

|

||||

|

|

@@ -102,18 +114,18 @@ if not arg_error:

|

|||

elif opt == "-U":

|

||||

icargs["username"] = arg

|

||||

|

||||

if "password" in icargs and "username" not in icargs:

|

||||

if "password" in icargs and "username" not in icargs:

|

||||

print("Password authentication requires username to be set")

|

||||

arg_error = True

|

||||

|

||||

if print_usage or arg_error:

|

||||

if print_usage or arg_error:

|

||||

print("Usage: " + sys.argv[0] + " [options...]")

|

||||

print("Options:")

|

||||

print(" -h: Be helpful")

|

||||

print(" -n <name>: Hostname of InfluxDB server, default: " + host_default)

|

||||

print(" -p <num>: Port number to use on InfluxDB server")

|

||||

print(" -t <num>: Loop interval in seconds or 0 for no loop, default: " +

|

||||

str(default_sleep_time))

|

||||

str(default_loop_time))

|

||||

print(" -v: Be verbose")

|

||||

print(" -C <filename>: Enable SSL/TLS using specified CA cert to verify server")

|

||||

print(" -D <name>: Database name to use, default: " + database_default)

|

||||

|

|

@@ -124,19 +136,17 @@ if print_usage or arg_error:

|

|||

print(" -U <name>: Set username for authentication")

|

||||

sys.exit(1 if arg_error else 0)

|

||||

|

||||

logging.basicConfig(format="%(levelname)s: %(message)s")

|

||||

logging.basicConfig(format="%(levelname)s: %(message)s")

|

||||

|

||||

def conn_error(msg):

|

||||

# Connection errors that happen while running in an interval loop are

|

||||

# not critical failures, because they can (usually) be retried, or

|

||||

# because they will be recorded as dish state unavailable. They're still

|

||||

# interesting, though, so print them even in non-verbose mode.

|

||||

if sleep_time > 0:

|

||||

print(msg)

|

||||

else:

|

||||

logging.error(msg)

|

||||

class GlobalState:

|

||||

pass

|

||||

|

||||

class DeviceStatusSeries(SeriesHelper):

|

||||

gstate = GlobalState()

|

||||

gstate.dish_channel = None

|

||||

gstate.dish_id = None

|

||||

gstate.pending = 0

|

||||

|

||||

class DeviceStatusSeries(SeriesHelper):

|

||||

class Meta:

|

||||

series_name = "spacex.starlink.user_terminal.status"

|

||||

fields = [

|

||||

|

|

@@ -154,33 +164,59 @@ class DeviceStatusSeries(SeriesHelper):

|

|||

"uplink_throughput_bps",

|

||||

"pop_ping_latency_ms",

|

||||

"currently_obstructed",

|

||||

"fraction_obstructed"]

|

||||

"fraction_obstructed",

|

||||

]

|

||||

tags = ["id"]

|

||||

retention_policy = rp

|

||||

|

||||

if "verify_ssl" in icargs and not icargs["verify_ssl"]:

|

||||

# user has explicitly said be insecure, so don't warn about it

|

||||

warnings.filterwarnings("ignore", message="Unverified HTTPS request")

|

||||

def conn_error(msg, *args):

|

||||

# Connection errors that happen in an interval loop are not critical

|

||||

# failures, but are interesting enough to print in non-verbose mode.

|

||||

if loop_time > 0:

|

||||

print(msg % args)

|

||||

else:

|

||||

logging.error(msg, *args)

|

||||

|

||||

influx_client = InfluxDBClient(**icargs)

|

||||

def flush_pending(client):

|

||||

try:

|

||||

DeviceStatusSeries.commit(client)

|

||||

if verbose:

|

||||

print("Data points written: " + str(gstate.pending))

|

||||

gstate.pending = 0

|

||||

except Exception as e:

|

||||

conn_error("Failed writing to InfluxDB database: %s", str(e))

|

||||

return 1

|

||||

|

||||

rc = 0

|

||||

try:

|

||||

dish_channel = None

|

||||

last_id = None

|

||||

last_failed = False

|

||||

return 0

|

||||

|

||||

pending = 0

|

||||

count = 0

|

||||

def get_status_retry():

|

||||

"""Try getting the status at most twice"""

|

||||

|

||||

channel_reused = True

|

||||

while True:

|

||||

try:

|

||||

if dish_channel is None:

|

||||

dish_channel = grpc.insecure_channel("192.168.100.1:9200")

|

||||

stub = spacex.api.device.device_pb2_grpc.DeviceStub(dish_channel)

|

||||

if gstate.dish_channel is None:

|

||||

gstate.dish_channel = grpc.insecure_channel("192.168.100.1:9200")

|

||||

channel_reused = False

|

||||

stub = spacex.api.device.device_pb2_grpc.DeviceStub(gstate.dish_channel)

|

||||

response = stub.Handle(spacex.api.device.device_pb2.Request(get_status={}))

|

||||

status = response.dish_get_status

|

||||

DeviceStatusSeries(

|

||||

id=status.device_info.id,

|

||||

return response.dish_get_status

|

||||

except grpc.RpcError:

|

||||

gstate.dish_channel.close()

|

||||

gstate.dish_channel = None

|

||||

if channel_reused:

|

||||

# If the channel was open already, the connection may have

|

||||

# been lost in the time since prior loop iteration, so after

|

||||

# closing it, retry once, in case the dish is now reachable.

|

||||

if verbose:

|

||||

print("Dish RPC channel error")

|

||||

else:

|

||||

raise
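
The new `get_status_retry` function above retries only when the failing channel was one kept open from an earlier loop iteration. The sketch below reproduces that control flow without grpc. `FakeChannelError`, `State`, `open_channel` and `request` are hypothetical stand-ins for `grpc.RpcError`, the global state object, `grpc.insecure_channel` and the `stub.Handle` call; closing the channel is omitted.

```python
class FakeChannelError(Exception):
    """Stand-in for grpc.RpcError so the sketch runs without grpc."""


class State:
    def __init__(self):
        self.channel = None  # cached channel, reused across calls


def call_with_one_retry(state, open_channel, request):
    """Try request(channel) at most twice, replacing a stale cached channel."""
    channel_reused = True
    while True:
        try:
            if state.channel is None:
                state.channel = open_channel()
                channel_reused = False
            return request(state.channel)
        except FakeChannelError:
            state.channel = None
            if not channel_reused:
                # A freshly opened channel failed too, so give up.
                raise
            # The cached channel may have gone stale since the last call.
            # Loop once more: the next pass opens a fresh channel, which
            # clears channel_reused and makes any further failure final.
```

The retry is bounded because the second pass always opens a new channel, so a second failure is re-raised to the caller.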

|

||||

|

||||

def loop_body(client):

|

||||

try:

|

||||

status = get_status_retry()

|

||||

DeviceStatusSeries(id=status.device_info.id,

|

||||

hardware_version=status.device_info.hardware_version,

|

||||

software_version=status.device_info.software_version,

|

||||

state=spacex.api.device.dish_pb2.DishState.Name(status.state),

|

||||

|

|

@@ -196,61 +232,52 @@ try:

|

|||

pop_ping_latency_ms=status.pop_ping_latency_ms,

|

||||

currently_obstructed=status.obstruction_stats.currently_obstructed,

|

||||

fraction_obstructed=status.obstruction_stats.fraction_obstructed)

|

||||

pending += 1

|

||||

last_id = status.device_info.id

|

||||

last_failed = False

|

||||

gstate.dish_id = status.device_info.id

|

||||

except grpc.RpcError:

|

||||

if dish_channel is not None:

|

||||

dish_channel.close()

|

||||

dish_channel = None

|

||||

if last_failed:

|

||||

if last_id is None:

|

||||

if gstate.dish_id is None:

|

||||

conn_error("Dish unreachable and ID unknown, so not recording state")

|

||||

# When not looping, report this as failure exit status

|

||||

rc = 1

|

||||

return 1

|

||||

else:

|

||||

if verbose:

|

||||

print("Dish unreachable")

|

||||

DeviceStatusSeries(id=last_id, state="DISH_UNREACHABLE")

|

||||

pending += 1

|

||||

else:

|

||||

DeviceStatusSeries(id=gstate.dish_id, state="DISH_UNREACHABLE")

|

||||

|

||||

gstate.pending += 1

|

||||

if verbose:

|

||||

print("Dish RPC channel error")

|

||||

# Retry once, because the connection may have been lost while

|

||||

# we were sleeping

|

||||

last_failed = True

|

||||

continue

|

||||

if verbose:

|

||||

print("Samples queued: " + str(pending))

|

||||

count += 1

|

||||

if count > 5:

|

||||

print("Data points queued: " + str(gstate.pending))

|

||||

if gstate.pending >= flush_limit:

|

||||

return flush_pending(client)

|

||||

|

||||

return 0

|

||||

|

||||

if "verify_ssl" in icargs and not icargs["verify_ssl"]:

|

||||

# user has explicitly said be insecure, so don't warn about it

|

||||

warnings.filterwarnings("ignore", message="Unverified HTTPS request")

|

||||

|

||||

signal.signal(signal.SIGTERM, handle_sigterm)

|

||||

influx_client = InfluxDBClient(**icargs)

|

||||

try:

|

||||

if pending:

|

||||

DeviceStatusSeries.commit(influx_client)

|

||||

rc = 0

|

||||

if verbose:

|

||||

print("Samples written: " + str(pending))

|

||||

pending = 0

|

||||

except Exception as e:

|

||||

conn_error("Failed to write: " + str(e))

|

||||

rc = 1

|

||||

count = 0

|

||||

if sleep_time > 0:

|

||||

time.sleep(sleep_time)

|

||||

next_loop = time.monotonic()

|

||||

while True:

|

||||

rc = loop_body(influx_client)

|

||||

if loop_time > 0:

|

||||

now = time.monotonic()

|

||||

next_loop = max(next_loop + loop_time, now)

|

||||

time.sleep(next_loop - now)

|

||||

else:

|

||||

break

|

||||

finally:

|

||||

except Terminated:

|

||||

pass

|

||||

finally:

|

||||

# Flush on error/exit

|

||||

try:

|

||||

if pending:

|

||||

DeviceStatusSeries.commit(influx_client)

|

||||

rc = 0

|

||||

if verbose:

|

||||

print("Samples written: " + str(pending))

|

||||

except Exception as e:

|

||||

conn_error("Failed to write: " + str(e))

|

||||

rc = 1

|

||||

if gstate.pending:

|

||||

rc = flush_pending(influx_client)

|

||||

influx_client.close()

|

||||

if dish_channel is not None:

|

||||

dish_channel.close()

|

||||

if gstate.dish_channel is not None:

|

||||

gstate.dish_channel.close()

|

||||

|

||||

sys.exit(rc)

|

||||

|

||||

|

||||

if __name__ == '__main__':

|

||||

main()
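
The script above converts SIGTERM into a `Terminated` exception so that the `finally` clause can flush pending data points before exit. A minimal sketch of that arrangement follows, assuming hypothetical `work` and `cleanup` callables in place of the loop body and the flush-and-close steps.

```python
import signal


class Terminated(Exception):
    pass


def handle_sigterm(signum, frame):
    # Turn SIGTERM into an exception so the main loop can clean up.
    raise Terminated()


def run_until_terminated(work, cleanup):
    """Run work() repeatedly until SIGTERM arrives, then always clean up."""
    # signal.signal() may only be called from the main thread.
    signal.signal(signal.SIGTERM, handle_sigterm)
    try:
        while True:
            work()
    except Terminated:
        # Normal shutdown request: fall through to cleanup.
        pass
    finally:
        cleanup()
```

Without the handler, the default SIGTERM action ends the process without unwinding the Python stack, so a `finally` block would not run.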


@@ -3,14 +3,15 @@

|

|||

#

|

||||

# Publish Starlink user terminal status info to a MQTT broker.

|

||||

#

|

||||

# This script pulls the current status once and publishes it to the

|

||||

# specified MQTT broker.

|

||||

# This script pulls the current status and publishes it to the

|

||||

# specified MQTT broker either once or in a periodic loop.

|

||||

#

|

||||

######################################################################

|

||||

|

||||

import sys

|

||||

import getopt

|

||||

import logging

|

||||

import sys

|

||||

import time

|

||||

|

||||

try:

|

||||

import ssl

|

||||

|

|

@@ -18,28 +19,32 @@ try:

|

|||

except ImportError:

|

||||

ssl_ok = False

|

||||

|

||||

import paho.mqtt.publish

|

||||

|

||||

import grpc

|

||||

import paho.mqtt.publish

|

||||

|

||||

import spacex.api.device.device_pb2

|

||||

import spacex.api.device.device_pb2_grpc

|

||||

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

opts, args = getopt.getopt(sys.argv[1:], "hn:p:C:ISP:U:")

|

||||

except getopt.GetoptError as err:

|

||||

def main():

|

||||

arg_error = False

|

||||

|

||||

try:

|

||||

opts, args = getopt.getopt(sys.argv[1:], "hn:p:t:vC:ISP:U:")

|

||||

except getopt.GetoptError as err:

|

||||

print(str(err))

|

||||

arg_error = True

|

||||

|

||||

print_usage = False

|

||||

host_default = "localhost"

|

||||

mqargs = {"hostname": host_default}

|

||||

username = None

|

||||

password = None

|

||||

print_usage = False

|

||||

verbose = False

|

||||

default_loop_time = 0

|

||||

loop_time = default_loop_time

|

||||

host_default = "localhost"

|

||||

mqargs = {"hostname": host_default}

|

||||

username = None

|

||||

password = None

|

||||

|

||||

if not arg_error:

|

||||

if not arg_error:

|

||||