Documentation updates

A bunch of content from the README and get_history_notes.txt has been moved to the Wiki, as it is not critical to understanding how to install or use the scripts. Move the checked in Grafana dashboard into a subdirectory in a feeble attempt to encourage other people to submit more of them. Change the officially supported Docker image to the one published to GitHub Package repository by this project's workflow task.

parent 46c8604dfc
commit 859dc84b88

4 changed files with 57 additions and 72 deletions

README.md (102 lines changed)

@@ -9,46 +9,24 @@ Most of the scripts here are [Python](https://www.python.org/) scripts. To use t

 All the tools that pull data from the dish expect to be able to reach it at the dish's fixed IP address of 192.168.100.1, as do the Starlink [Android app](https://play.google.com/store/apps/details?id=com.starlink.mobile), [iOS app](https://apps.apple.com/us/app/starlink/id1537177988), and the browser app you can run directly from http://192.168.100.1. When using a router other than the one included with the Starlink installation kit, this usually requires some additional router configuration to make it work. That configuration is beyond the scope of this document, but if the Starlink app doesn't work on your home network, then neither will these scripts. That being said, you do not need the Starlink app installed to make use of these scripts. See [here](https://github.com/starlink-community/knowledge-base/wiki#using-your-own-router) for more detail on this.

-Running the scripts within a [Docker](https://www.docker.com/) container requires Docker to be installed. Information about how to install that can be found at https://docs.docker.com/engine/install/
+Running the scripts within a [Docker](https://www.docker.com/) container requires Docker to be installed. Information about how to install that can be found at https://docs.docker.com/engine/install/. See below for how to pull the starlink-grpc-tools container image.

-`dish_json_text.py` operates on a JSON format data representation of the protocol buffer messages, such as that output by [gRPCurl](https://github.com/fullstorydev/grpcurl). The command lines below assume `grpcurl` is installed in the runtime PATH. If that's not the case, just substitute in the full path to the command.
+### Required Python modules (for non-Docker usage)

-### Required Python modules
+The easiest way to get the Python modules used by the scripts is to do the following, which will install latest versions of a superset of the required modules:

-If you don't care about the details or minimizing your package requirements, you can skip the rest of this section and just do this to install latest versions of a superset of required modules:
 ```shell script
 pip install --upgrade -r requirements.txt
 ```

-The scripts that don't use `grpcurl` to pull data require the `grpcio` Python package at runtime and the optional step of generating the gRPC protocol module code requires the `grpcio-tools` package. Information about how to install both can be found at https://grpc.io/docs/languages/python/quickstart/. If you skip generation of the gRPC protocol modules, the scripts will instead require the `yagrc` Python package. Information about how to install that is at https://github.com/sparky8512/yagrc.
+If you really care about the details here or wish to minimize your package requirements, you can find more detail about which specific modules are required for what usage in [this Wiki article](https://github.com/sparky8512/starlink-grpc-tools/wiki/Python-Module-Dependencies).

-The scripts that use [MQTT](https://mqtt.org/) for output require the `paho-mqtt` Python package. Information about how to install that can be found at https://www.eclipse.org/paho/index.php?page=clients/python/index.php
+### InfluxDB 2.x

-The scripts that use [InfluxDB](https://www.influxdata.com/products/influxdb/) for output require the `influxdb` Python package. Information about how to install that can be found at https://github.com/influxdata/influxdb-python. Note that this is the (slightly) older version of the InfluxDB client Python module, not the InfluxDB 2.0 client. It can still be made to work with an InfluxDB 2.0 server, but doing so requires using `influx v1` [CLI commands](https://docs.influxdata.com/influxdb/v2.0/reference/cli/influx/v1/) on the server to map the 1.x username, password, and database names to their 2.0 equivalents.
+The script that records data to InfluxDB uses the (slightly) older version of the InfluxDB client Python module, not the InfluxDB 2.x client. It can still be made to work with an InfluxDB 2.0 server (and probably later 2.x versions), but doing so requires using `influx v1` [CLI commands](https://docs.influxdata.com/influxdb/v2.0/reference/cli/influx/v1/) on the server to map the 1.x username, password, and database names to their 2.x equivalents.

-The `dish_obstruction_map.py` script requires the `pypng` Python package. Information about how to install that can be found at https://pypng.readthedocs.io/en/latest/png.html#installation-and-overview
+### Generating the gRPC protocol modules (for non-Docker usage)

-Note that the Python package versions available from various Linux distributions (ie: installed via `apt-get` or similar) tend to run a bit behind those available to install via `pip`. While the distro packages should work OK as long as they aren't extremely old, they may not work as well as the later versions.
+This step is no longer required, nor is it particularly recommended, so the details have been moved to [this Wiki article](https://github.com/sparky8512/starlink-grpc-tools/wiki/gRPC-Protocol-Modules).

-### Generating the gRPC protocol modules

-This step is no longer required, as the grpc scripts can now get the protocol module classes at run time via reflection, but generating the protocol modules will improve script startup time, and it would be a good idea to at least stash away the protoset file emitted by `grpcurl` in case SpaceX ever turns off server reflection in the dish software. That being said, it's probably less error prone to use the run time reflection support rather than using the files generated in this step, so use your own judgement as to whether or not to proceed with this.

-The grpc scripts require some generated code to support the specific gRPC protocol messages used. These would normally be generated from .proto files that specify those messages, but to date (2020-Dec), SpaceX has not publicly released such files. The gRPC service running on the dish appears to have [server reflection](https://github.com/grpc/grpc/blob/master/doc/server-reflection.md) enabled, though. `grpcurl` can use that to extract a protoset file, and the `protoc` compiler can use that to make the necessary generated code:
-```shell script
-grpcurl -plaintext -protoset-out dish.protoset 192.168.100.1:9200 describe SpaceX.API.Device.Device
-mkdir src
-cd src
-python3 -m grpc_tools.protoc --descriptor_set_in=../dish.protoset --python_out=. --grpc_python_out=. spacex/api/device/device.proto
-python3 -m grpc_tools.protoc --descriptor_set_in=../dish.protoset --python_out=. --grpc_python_out=. spacex/api/common/status/status.proto
-python3 -m grpc_tools.protoc --descriptor_set_in=../dish.protoset --python_out=. --grpc_python_out=. spacex/api/device/command.proto
-python3 -m grpc_tools.protoc --descriptor_set_in=../dish.protoset --python_out=. --grpc_python_out=. spacex/api/device/common.proto
-python3 -m grpc_tools.protoc --descriptor_set_in=../dish.protoset --python_out=. --grpc_python_out=. spacex/api/device/dish.proto
-python3 -m grpc_tools.protoc --descriptor_set_in=../dish.protoset --python_out=. --grpc_python_out=. spacex/api/device/wifi.proto
-python3 -m grpc_tools.protoc --descriptor_set_in=../dish.protoset --python_out=. --grpc_python_out=. spacex/api/device/wifi_config.proto
-python3 -m grpc_tools.protoc --descriptor_set_in=../dish.protoset --python_out=. --grpc_python_out=. spacex/api/device/transceiver.proto
-```
-Then move the resulting files to where the Python scripts can find them in the import path, such as in the same directory as the scripts themselves.

 ## Usage

@@ -56,16 +34,16 @@ Of the 3 groups below, the grpc scripts are really the only ones being actively

 ### The grpc scripts

-This set of scripts includes `dish_grpc_text.py`, `dish_grpc_influx.py`, `dish_grpc_sqlite.py`, and `dish_grpc_mqtt.py`. They mostly support the same functionality, but write their output in different ways. `dish_grpc_text.py` writes data to standard output, `dish_grpc_influx.py` sends it to an InfluxDB server, `dish_grpc_sqlite.py` writes it a sqlite database, and `dish_grpc_mqtt.py` sends it to a MQTT broker.
+This set of scripts includes `dish_grpc_text.py`, `dish_grpc_influx.py`, `dish_grpc_sqlite.py`, and `dish_grpc_mqtt.py`. They mostly support the same functionality, but write their output in different ways. `dish_grpc_text.py` writes data to standard output, `dish_grpc_influx.py` sends it to an InfluxDB server, `dish_grpc_sqlite.py` writes it to a sqlite database, and `dish_grpc_mqtt.py` sends it to a MQTT broker.

 All 4 scripts support processing status data and/or history data in various modes. The status data is mostly what appears related to the dish in the Debug Data section of the Starlink app, whereas most of the data displayed in the Statistics page of the Starlink app comes from the history data. Specific status or history data groups can be selected by including their mode names on the command line. Run the scripts with `-h` command line option to get a list of available modes. See the documentation at the top of `starlink_grpc.py` for detail on what each of the fields means within each mode group.

-For example, all the currently available status groups can be output by doing:
+For example, data from all the currently available status groups can be output by doing:
 ```shell script
 python3 dish_grpc_text.py status obstruction_detail alert_detail
 ```

-By default, `dish_grpc_text.py` (and `dish_json_text.py`, described below) will output in CSV format. You can use the `-v` option to instead output in a (slightly) more human-readable format.
+By default, `dish_grpc_text.py` will output in CSV format. You can use the `-v` option to instead output in a (slightly) more human-readable format.

 By default, all of these scripts will pull data once, send it off to the specified data backend, and then exit. They can instead be made to run in a periodic loop by passing a `-t` option to specify loop interval, in seconds. For example, to capture status information to a InfluxDB server every 30 seconds, you could do something like this:
 ```shell script

@@ -88,7 +66,7 @@ python3 dish_grpc_text.py -t 60 -o 60 ping_drop
 ```
 will poll history data once per minute, but compute statistics only once per hour. This also reduces data loss due to a dish reboot, since the `-o` option will aggregate across reboots, too.

-### The obstruction map script
+#### The obstruction map script

 `dish_obstruction_map.py` is a little different in that it doesn't write to a database, but rather writes PNG images to the local filesystem. To get a single image of the current obstruction map using the default colors, you can do the following:
 ```shell script

@@ -103,6 +81,8 @@ Run it with the `-h` command line option for full usage details, including contr

 ### The JSON parser script

+`dish_json_text.py` operates on a JSON format data representation of the protocol buffer messages, such as that output by [gRPCurl](https://github.com/fullstorydev/grpcurl). The command lines below assume `grpcurl` is installed in the runtime PATH. If that's not the case, just substitute in the full path to the command.

 `dish_json_text.py` is similar to `dish_grpc_text.py`, but it takes JSON format input from a file instead of pulling it directly from the dish via grpc call. It also does not support the status info modes, because those are easy enough to interpret directly from the JSON data. The easiest way to use it is to pipe the `grpcurl` command directly into it. For example:
 ```shell script
 grpcurl -plaintext -d {\"get_history\":{}} 192.168.100.1:9200 SpaceX.API.Device.Device/Handle | python3 dish_json_text.py ping_drop

@@ -128,41 +108,63 @@ python3 poll_history.py
 ```
 Possibly more simple examples to come, as the other scripts have started getting a bit complicated.

-## Docker for InfluxDB ( & MQTT under development )
+## Running with Docker

-Initialization of the container can be performed with the following command:
+The supported docker image for this project is now the one hosted in the [GitHub Packages repository](https://github.com/sparky8512/starlink-grpc-tools/pkgs/container/starlink-grpc-tools/versions).

+You can get the "latest" image with the following command:
+```shell script
+docker pull ghcr.io/sparky8512/starlink-grpc-tools
+```
+This will pull the image tagged as "latest". There should also be images for all recent tagged releases of this project, but those tend to be few and far between, so the most recent one will often be missing some important changes. See the package repository for a full list of tagged images.

+You can run it with the following:
+```shell script
+docker run --name='starlink-grpc-tools' ghcr.io/sparky8512/starlink-grpc-tools <script_name>.py <script args...>
+```
+For example, the following will print current status info and then exit:
+```shell script
+docker run --name='starlink-grpc-tools' ghcr.io/sparky8512/starlink-grpc-tools dish_grpc_text.py -v status alert_detail
+```
+Of course, you can change the name to whatever you want instead, and use other docker run options, as appropriate.

+The default command is `dish_grpc_influx.py status alert_detail`, which is really only useful if you pass in environment variables with user and database info, such as:
+```shell script
+docker run --name='starlink-grpc-tools' -e INFLUXDB_HOST={InfluxDB Hostname} \
+    -e INFLUXDB_PORT={Port, 8086 usually} \
+    -e INFLUXDB_USER={Optional, InfluxDB Username} \
+    -e INFLUXDB_PWD={Optional, InfluxDB Password} \
+    -e INFLUXDB_DB={Pre-created DB name, starlinkstats works well} \
+    ghcr.io/sparky8512/starlink-grpc-tools
+```

|

When running in the background, you will probably want to specify a `-t` script option, to run in a loop, otherwise it will exit right away and leave an inactive container. For example:

|

||||||

```shell script

|

```shell script

|

||||||

docker run -d -t --name='starlink-grpc-tools' -e INFLUXDB_HOST={InfluxDB Hostname} \

|

docker run -d -t --name='starlink-grpc-tools' -e INFLUXDB_HOST={InfluxDB Hostname} \

|

||||||

-e INFLUXDB_PORT={Port, 8086 usually} \

|

-e INFLUXDB_PORT={Port, 8086 usually} \

|

||||||

-e INFLUXDB_USER={Optional, InfluxDB Username} \

|

-e INFLUXDB_USER={Optional, InfluxDB Username} \

|

||||||

-e INFLUXDB_PWD={Optional, InfluxDB Password} \

|

-e INFLUXDB_PWD={Optional, InfluxDB Password} \

|

||||||

-e INFLUXDB_DB={Pre-created DB name, starlinkstats works well} \

|

-e INFLUXDB_DB={Pre-created DB name, starlinkstats works well} \

|

||||||

neurocis/starlink-grpc-tools dish_grpc_influx.py -v status alert_detail

|

ghcr.io/sparky8512/starlink-grpc-tools -v -t 60 status alert_detail

|

||||||

```

|

```

|

||||||

|

|

||||||

The `-t` option to `docker run` will prevent Python from buffering the script's standard output and can be omitted if you don't care about seeing the verbose output in the container logs as soon as it is printed.

|

The `-t` option to `docker run` will prevent Python from buffering the script's standard output and can be omitted if you don't care about seeing the verbose output in the container logs as soon as it is printed.

|

||||||

|

|

||||||

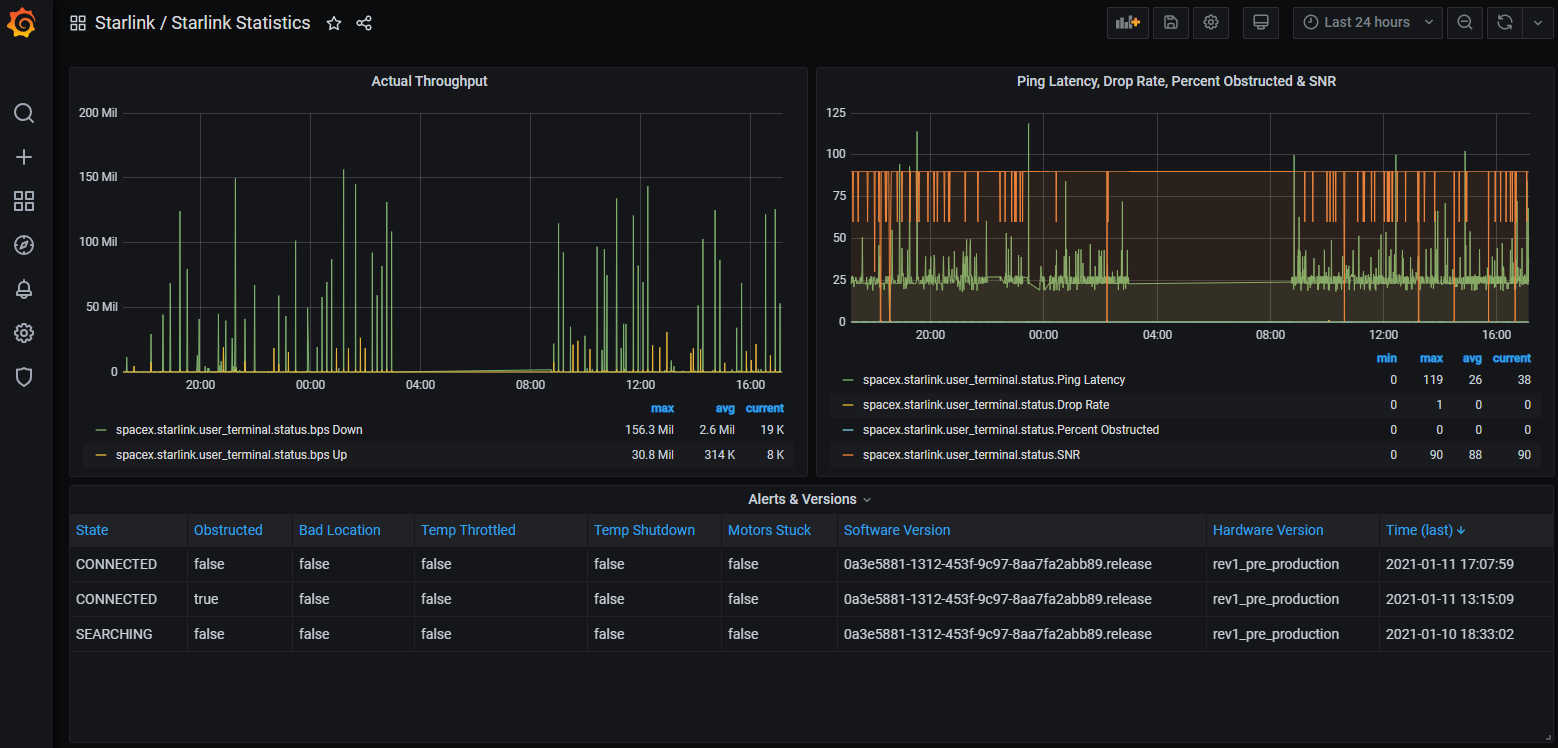

The `dish_grpc_influx.py -v status alert_detail` is optional and omitting it will run same but not verbose, or you can replace it with one of the other scripts if you wish to run that instead, or use other command line options. There is also a `GrafanaDashboard - Starlink Statistics.json` which can be imported to get some charts like:

|

If there is some problem with accessing the image from the GitHub Packages repository, there is also an image available on Docker Hub, which can be accessed as `neurocis/starlink-grpc-tools`, but note that that image may not be as up to date with changes as the supported one.

|

||||||

|

|

||||||

|

## Dashboards

|

||||||

|

|

||||||

You'll probably want to run with the `-t` option to `dish_grpc_influx.py` to collect status information periodically for this to be meaningful.

|

Several users have built dashboards for displaying data collected by the scripts in this project. Information on those can be found in [this Wiki article](https://github.com/sparky8512/starlink-grpc-tools/wiki/Dashboards). If you have one you would like to add, please feel free to edit the Wiki page to do so.

|

||||||

|

|

||||||

## To Be Done (Maybe)

|

Note that feeding a dashboard will likely need the `-t` script option to `dish_grpc_influx.py` in order to collect status and/or history information periodically.

|

||||||

|

|

||||||

|

## To Be Done (Maybe, but Probably Not)

|

||||||

|

|

||||||

|

The [Wiki for this GitHub project](https://github.com/sparky8512/starlink-grpc-tools/wiki) has a little more information, and was originally planned to have more detail on some aspects of the history data, but that's mostly been obsoleted by changes to the gRPC service. It still may be updated some day with more use case examples or other information. In the mean time, it is configured as editable by anyone with a GitHub login, so if you have relevant content you believe to be useful, feel free to add it.

|

||||||

|

|

||||||

 There are `reboot` and `dish_stow` requests in the Device protocol, too, so it should be trivial to write a command that initiates dish reboot and stow operations. These are easy enough to do with `grpcurl`, though, as there is no need to parse through the response data. For that matter, they're easy enough to do with the Starlink app.

 No further data collection functionality is planned at this time. If there's something you'd like to see added, please feel free to open a feature request issue. Bear in mind, though, that functionality will be limited to that which the Starlink gRPC services support. In general, those services are limited to what is required by the Starlink app, so unless the app has some related feature, it is unlikely the gRPC services will be sufficient to implement it in these tools.

-## Other Tidbits

-The Starlink Android app actually uses port 9201 instead of 9200. Both appear to expose the same gRPC service, but the one on port 9201 uses [gRPC-Web](https://github.com/grpc/grpc/blob/master/doc/PROTOCOL-WEB.md), which can use HTTP/1.1, whereas the one on port 9200 uses HTTP/2, which is what most gRPC tools expect.

-The Starlink router also exposes a gRPC service, on ports 9000 (HTTP/2.0) and 9001 (HTTP/1.1).

-The file `get_history_notes.txt` has my original ramblings on how to interpret the history buffer data (with the JSON format naming). It may be of interest if you want to pull the `get_history` grpc data directly and don't want to dig through the convoluted logic in the `starlink_grpc` module.

 ## Related Projects

 [ChuckTSI's Better Than Nothing Web Interface](https://github.com/ChuckTSI/BetterThanNothingWebInterface) uses grpcurl and PHP to provide a spiffy web UI for some of the same data this project works on.

dashboards/README.md (5 lines changed, new normal file)

@@ -0,0 +1,5 @@
+This directory contains user-contributed dashboards for use with the data collected by the scripts in this project.

+If you have built a dashboard you think is useful, please consider filing a pull request to have it added here.

+Alternatively, just post it anywhere and update the [Dashboards Wiki article](https://github.com/sparky8512/starlink-grpc-tools/wiki/Dashboards) to point to it. This project's Wiki is configured to be editable by anyone with a GitHub login, so no special permissions are needed for doing so.

@@ -1,22 +0,0 @@
-This file contains notes about the data returned from a "get_history" gRPC request sent to a Starlink dish, based on observing the data over time and comparing it to what shows up in the Statistics page of the Starlink Android app (which appears to use this same data via the gRPC service). The names below are from the JSON format data that grpcurl spits out, so are slightly different from what would show up in .proto files that describe the data structures.

-DISCLAIMER: This is not official documentation of this data format, and some of what is written here could be a misinterpretation of what the data actually means, and thus be utterly wrong.

-Sample points are one per second, the entire data set covers up to 12 hours, and it is arranged in what appears to be a ring buffer. It's not clear what exactly a "ping" means in this data, or between which points it's measuring RTT, but the latency values correspond roughly with ICMP echo ping times from a PC on my home network and IP address 8.8.8.8, so it's presumably at least all the way through a ground station.

-"current" is the total number of samples that have been written to the ring buffer, irrespective of buffer wrap. Since the samples are written once per second and the buffer resets on dish reboot, this value will be roughly equal to dish uptime in seconds. This value can be used to index into the data arrays to find the location of the current data sample. The data arrays are represented as fixed size (currently 43200 element) ring buffers. In other words: If "current" is less than 43200, then the valid data samples can be found in each array at index 0 (oldest data sample) through (and including) index "current" - 1 (latest data sample). If "current" is greater or equal to 43200, then all array elements contain valid data, but it will be ordered within each array as index "current" mod 43200 (oldest data sample) through index 43199, then index 0 through index ("current" mod 43200) - 1 (latest data sample). If the data arrays are different size in the future, then use that size in the modulo arithmetic instead of 43200.
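
The indexing rule described for "current" can be sketched in Python. This is only an illustration of the modulo arithmetic in the note above, not code from this project: the function name `unwrap_ring` and the 5-element demo buffer are hypothetical stand-ins (the real arrays currently hold 43200 elements), and the buffer size is taken from the array length so a future size change would not matter.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def unwrap_ring(samples: Sequence[T], current: int) -> List[T]:
    """Return the valid samples ordered from oldest to latest.

    `samples` is one of the history arrays and `current` is the total
    number of samples written so far (both names are illustrative).
    """
    size = len(samples)
    if size == 0 or current <= 0:
        return []
    if current < size:
        # Buffer has not wrapped yet: indexes 0 .. current-1 are valid.
        return list(samples[:current])
    # Buffer has wrapped: the oldest sample sits at index current mod size.
    start = current % size
    return list(samples[start:]) + list(samples[:start])


# Tiny 5-element buffer standing in for the real arrays.
# 12 samples have been written, so samples 7..11 are the ones retained.
demo = [10, 11, 7, 8, 9]
print(unwrap_ring(demo, 12))  # [7, 8, 9, 10, 11]
```

Deriving the size from `len(samples)` rather than hard-coding 43200 follows the note's own advice about using the actual array size in the modulo arithmetic.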

-"popPingDropRate": Fraction of lost ping replies per sample. Given the small fractions reported for some, this implies the dish is collecting stats for many pings per second, and just reporting totals and/or averages per second, but that's just conjecture on my part.

-"popPingLatencyMs": Round trip time, in milliseconds. NOTE: Whenever the popPingDropRate value is 1 for a sample, it appears the popPingLatencyMs value will just repeat prior value rather than leaving a hole in the data.
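
Because a fully dropped sample appears to repeat the prior latency value, averaging "popPingLatencyMs" over every sample would count stale values. The sketch below shows one possible way to skip them. It assumes the two arrays are already in time order and of equal length, and `mean_latency_ms` is a hypothetical helper name, not something defined by the scripts in this project.

```python
from typing import Optional, Sequence


def mean_latency_ms(drop_rate: Sequence[float],
                    latency_ms: Sequence[float]) -> Optional[float]:
    """Average latency over samples that were not fully dropped.

    Samples with a drop rate of 1 are skipped because, per the note
    above, their latency value just repeats the prior sample.
    """
    kept = [lat for drop, lat in zip(drop_rate, latency_ms) if drop < 1.0]
    if not kept:
        return None  # every sample was fully dropped (or no data at all)
    return sum(kept) / len(kept)


# Made-up sample data: the two middle samples were fully dropped.
drops = [0.0, 1.0, 1.0, 0.25]
lats = [40.0, 40.0, 40.0, 50.0]
print(mean_latency_ms(drops, lats))  # 45.0
```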

-"downlinkThroughputBps", "uplinkThroughputBps": The app labels these "Download Usage" and "Upload Usage", so this is presumably number of bits actually used, not what is available to be used. From the max values, it appears to be in bits per second.

-"snr": Signal to noise ratio, capped at 9.

-"scheduled": When false, ping drop shows up as "No satellites" in app, so presumably true means at least 1 satellite is within geometric view of the dish.

-"obstructed": When true, ping drop shows up as "Obstructed" in app, so presumably means dish has somehow detected the view in the direction of the satellite is obstructed.

-There is no specific data field that obviously correlates with "Beta Downtime", so I'm guessing those are the points where "popPingDropRate" is 1, but the reason could not be identified because "scheduled" is true and "obstructed" is false.
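
The guess about how the app labels dropped samples can be written out as a small classifier. This is a sketch of the interpretation in these notes only, under stated assumptions: it counts only samples whose drop rate is exactly 1, it checks "scheduled" before "obstructed" (the notes do not say which wins when both apply), and the function and category names are hypothetical.

```python
from collections import Counter
from typing import Dict, Sequence


def classify_full_drops(drop_rate: Sequence[float],
                        scheduled: Sequence[bool],
                        obstructed: Sequence[bool]) -> Dict[str, int]:
    """Count fully dropped samples by presumed reason."""
    counts: Counter = Counter()
    for drop, sched, obst in zip(drop_rate, scheduled, obstructed):
        if drop < 1.0:
            continue  # partial or no loss: not classified in this sketch
        if not sched:
            counts["no_satellites"] += 1   # app shows "No satellites"
        elif obst:
            counts["obstructed"] += 1      # app shows "Obstructed"
        else:
            counts["unexplained"] += 1     # presumed "Beta Downtime"
    return dict(counts)


# Made-up sample data covering each case once, plus two ignored samples.
print(classify_full_drops(
    [1.0, 1.0, 1.0, 0.0, 0.5],
    [False, True, True, True, True],
    [False, True, False, False, True],
))  # {'no_satellites': 1, 'obstructed': 1, 'unexplained': 1}
```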
